Predicting League One: How the BTP Model Works

🤖 BTP Machine Learning Series

League One returns after the international break on 28 March. Before it does, this is the story of how BeyondThePrem extended its ML prediction model to League One — and what the numbers told us about a division with more statistical structure than the Championship above it.

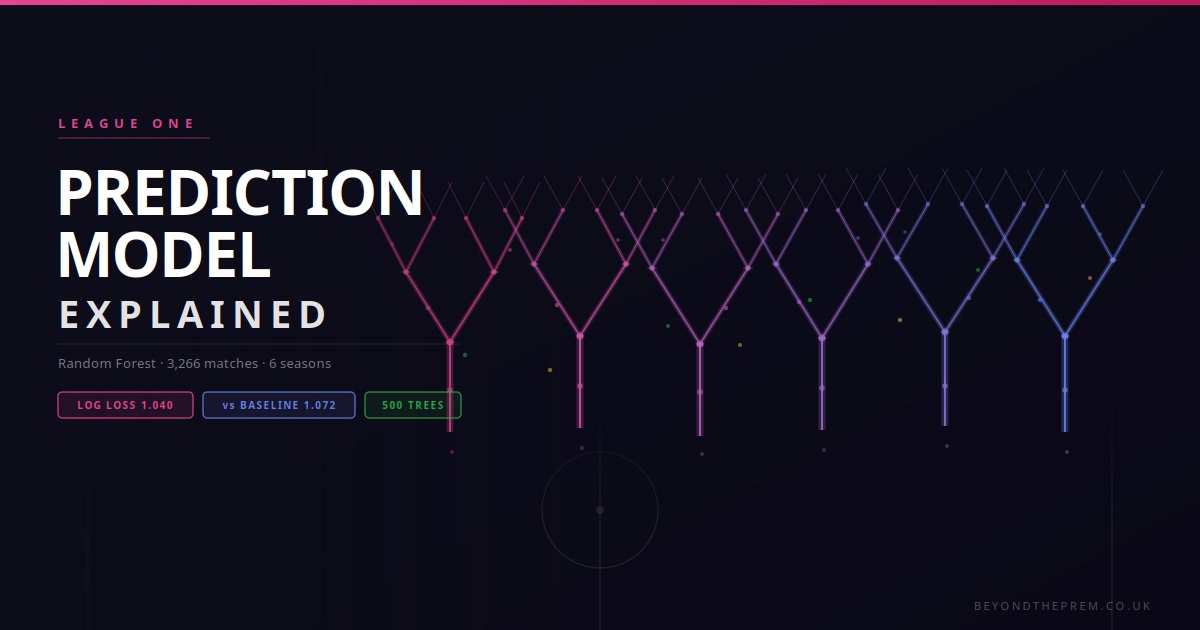

The BTP ML system launched for the Championship in GW40 of 2025/26. From GW40 of the same season, it also goes live for League One. The two models were built using identical methodology: the same rolling form features, the same season split, and the same four-model comparison. But the League One results differ in two key ways: the improvement over baseline is larger, and a different algorithm wins. This post explains why.

For background on the shared methodology — log loss, rolling windows, the COVID flag — see the Championship explainer. This post covers the League One-specific findings and the most interesting difference: why Random Forest beat Logistic Regression here when it didn’t in the Championship.

📊 The Data

📁 League One Dataset

The BTP database holds every League One result since 2019/20. After filtering to finished matches only and excluding the current 2025/26 season (live data — never used for training), the modelling dataset covers:

| Matches | Seasons | Nulls dropped |
| --- | --- | --- |
| 3,266 finished results | 6 (2019/20 – 2024/25) | 0 (clean dataset) |

The Championship dataset dropped 72 rows — early-season matches where teams had fewer than five prior games. League One dropped zero. This is because the 2019/20 season, which was truncated by COVID and restarted in biosecure bubbles, produced only 405 League One matches rather than a full 552+. That early-season gap meant teams already had sufficient prior history when the season resumed in the summer of 2020. The result: a perfectly clean feature dataset with no imputation needed.

COVID flag: The 2019/20 and 2020/21 seasons were played entirely or partly behind closed doors. A crowd_present feature (0 or 1) was added to capture this structural difference — the same flag used in the Championship model.

No xG in League One — a simpler decision

xG data does not exist for League One in the BTP database. The Championship explainer described a difficult trade-off: theoretically superior xG features vs. the data volume advantage of six seasons of goals data. In League One there is no trade-off — the decision makes itself. The model uses goals-based rolling features exclusively, and we lose no sleep about it.

This is actually an advantage in one respect: no complex xG parallel model to maintain, no partial coverage to manage, and no model versioning as xG data accumulates. When xG does become available for League One, it will be incorporated and the model retrained — at which point the same head-to-head comparison from the Championship can be run here too.

Outcome distribution

⚙️ How the Model Works

Feature Engineering

The League One feature set is identical to the Championship model’s: same rolling windows, same position bands, same season ordinal and crowd flag. The core principle is unchanged: the model never sees the match it is predicting. Every feature is calculated from prior matches only, and rolling windows reset at the start of each season, so a team’s 2023/24 form never bleeds into its 2024/25 window.

Rolling form windows

For each team, the model calculates goals scored, goals conceded, and points earned across the last 5 games and the last 10 games, home and away combined. That is 3 statistics × 2 windows = 6 rolling features per team, or 12 per match. The 10-game window consistently outranked the 5-game window in importance; form over a longer spell is more predictive than recent volatility. A team that won its last game after losing five in a row still looks weak in the 10-game window, and that broader context is what matters.
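As a rough illustration of the rolling-window idea, the sketch below computes form for one team using only matches it has already played. The `Result` tuple, the `rolling_form` function, the `min_games` threshold and the sample results are all hypothetical; the actual BTP pipeline code is not shown in this post.

```python
from collections import deque
from typing import NamedTuple, Optional


class Result(NamedTuple):
    """One finished match from a single team's point of view (hypothetical shape)."""
    season: str
    goals_for: int
    goals_against: int


def points(result: Result) -> int:
    """League points earned: 3 for a win, 1 for a draw, 0 for a loss."""
    if result.goals_for > result.goals_against:
        return 3
    return 1 if result.goals_for == result.goals_against else 0


def rolling_form(history: list[Result], window: int, min_games: int = 5) -> list[Optional[dict]]:
    """Form features per match, built only from earlier matches in the same season.

    history must be in chronological order. Entry i describes the team before
    match i kicks off, so a match never contributes to its own features.
    """
    features: list[Optional[dict]] = []
    recent = deque(maxlen=window)  # keeps only the latest `window` results
    current_season = None
    for match in history:
        if match.season != current_season:
            recent.clear()  # windows reset at the start of each season
            current_season = match.season
        if len(recent) < min_games:
            features.append(None)  # assumption: too little history, no features
        else:
            features.append({
                "gf": sum(r.goals_for for r in recent),
                "ga": sum(r.goals_against for r in recent),
                "pts": sum(points(r) for r in recent),
            })
        recent.append(match)  # only now does this match join the window
    return features


# Five 0-1 losses, then a 2-0 win, then a seventh match to be predicted.
history = [Result("2024/25", 0, 1)] * 5 + [Result("2024/25", 2, 0), Result("2024/25", 1, 1)]
form5 = rolling_form(history, window=5)
form10 = rolling_form(history, window=10)
print(form5[6])   # built from the last 5 games: four losses and the win
print(form10[6])  # built from all six prior games: five losses and the win
```

Appending the match to the window only after its features are recorded is what keeps the prediction target out of its own inputs.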

League position at kickoff

Both teams’ positions in the League One table at the time of the match — computed from all completed results up to that point in the season. A team in the top 6 (promotion zone), mid-table (7th–20th), or bottom four (relegation zone) gets a position band flag, one-hot encoded. In League One, the gap between position 1 and position 24 tends to be larger in goals-per-game terms than in the Championship — and that gap is what makes position the dominant feature in this model.
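The band flags can be sketched in a few lines. The band boundaries come from the paragraph above; the function names and column names (`home_top6` and so on) are hypothetical and chosen only for illustration.

```python
BANDS = ("top6", "mid", "bottom4")


def position_band(position: int) -> str:
    """Map a league position (1-24) to the band described in the text."""
    if not 1 <= position <= 24:
        raise ValueError(f"League One has 24 places, got {position}")
    if position <= 6:
        return "top6"
    if position <= 20:
        return "mid"
    return "bottom4"


def one_hot_band(position: int, prefix: str) -> dict[str, int]:
    """One-hot encode the band as 0/1 columns, e.g. home_top6, home_mid, home_bottom4."""
    band = position_band(position)
    return {f"{prefix}_{name}": int(name == band) for name in BANDS}


# A 20th-placed home side against a 17th-placed away side: both are mid-table.
row = {**one_hot_band(20, "home"), **one_hot_band(17, "away")}
print(row)
```

One-hot encoding lets a model treat each band as its own category rather than assuming that the jump from 6th to 7th matters as much as the jump from 12th to 13th.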

Top Feature Importances

From the production Random Forest model, the top predictors by mean feature importance across all three outcome classes:

League position dominates — more so than in the Championship model, where it was also the top feature but the gap between position and rolling form was narrower. League One’s wider spread in team quality makes raw table position a stronger predictor of match outcome.
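For readers who want to see how such a ranking can be produced, here is a minimal sketch using scikit-learn's impurity-based `feature_importances_`. The feature names and the data are synthetic stand-ins, and whether the BTP figures were computed with this exact attribute is an assumption.

```python
import numpy as np
from sklearn.ensemble import RandomForestClassifier

rng = np.random.default_rng(0)
feature_names = ["position_gap", "home_pts_last10", "away_pts_last10", "noise"]  # hypothetical

# Synthetic data in which the outcome depends mostly on position_gap.
X = rng.normal(size=(600, len(feature_names)))
score = 1.5 * X[:, 0] + 0.5 * X[:, 1] - 0.5 * X[:, 2] + rng.normal(size=600)
y = np.digitize(score, [-0.7, 0.7])  # 0 = away win, 1 = draw, 2 = home win

model = RandomForestClassifier(n_estimators=500, random_state=0).fit(X, y)

# Impurity-based importances sum to 1; sort from most to least important.
ranked = sorted(
    zip(feature_names, model.feature_importances_),
    key=lambda pair: pair[1],
    reverse=True,
)
for name, importance in ranked:
    print(f"{name:<16} {importance:.3f}")
```

Impurity-based importances describe the model as a whole across the training data, which is why they suit an overall summary but not an explanation of a single fixture.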

🌳 Why Random Forest — Not Logistic Regression

The Most Interesting Difference from the Championship

In the Championship model, Logistic Regression won — just. Log loss 1.064 vs Random Forest’s 1.066. A margin of 0.002. Both models were genuinely close, with LR edging it on probability calibration.

In League One, the result is cleaner: Random Forest wins more convincingly.

| Division | Best model | Log loss | Runner-up margin | Verdict |
| --- | --- | --- | --- | --- |
| Championship | Logistic Regression | 1.064 | RF +0.002 worse | Very close, almost a coin flip between the two |
| League One | Random Forest | 1.040 | LR +0.008 worse | Clearer winner: RF captures non-linear structure |

The likely explanation lies in the structural character of each division. The Championship is broadly competitive — most sides are capable of beating most others on a given day. The gap between position 1 and position 24 in goals-for per game is relatively compressed. In this environment, a linear model like logistic regression handles the relationships well because the relationships are approximately linear.

League One has more extremes. Sides like Lincoln City (74 goals, +40 GD at GW39) coexist with clubs like Port Vale (29 goals, −21 GD) in a way that creates sharper, more non-linear decision boundaries. When the top team meets the bottom team, it’s not just “slightly more likely home win”: the gap in features is so large that a model capable of learning thresholds and interactions handles it better than a linear one. That is what Random Forest does.

Practical implication: League One’s best model (log loss 1.040) beats the Championship’s best model (log loss 1.064). This means League One results are, in statistical terms, slightly more predictable. Not easy to predict — but the underlying signal from league position and form is stronger because the gaps between sides are larger on average.

🌳 What is a Random Forest?

A Random Forest is a machine learning algorithm that builds hundreds of individual decision trees — each one trained on a slightly different random sample of the data — and combines their votes to make a final prediction. Think of it like asking 500 independent analysts to each study a subset of League One history and cast a vote on the likely outcome. The majority view wins.

The “random” part is deliberate. By introducing randomness into which data each tree sees and which features it considers, the model avoids over-relying on any single pattern that might just be noise. The result is a more robust prediction than any one tree would produce alone.

For League One prediction, Random Forest outperformed both Logistic Regression and XGBoost on the 2024/25 test season — making it the model behind the probability estimates you see here.

🌳 How Random Forest Works

500 decision trees, majority vote

A single decision tree learns rules like “if home position ≤ 6 AND away points last 10 < 12, predict home win.” They’re fast but brittle — small changes in data cause big changes in the tree. A Random Forest builds 500 such trees, each trained on a random sample of the data and a random subset of features. To make a prediction, all 500 trees vote, and the majority wins. This “ensemble” approach is far more stable and handles non-linear interactions naturally — which is exactly what League One’s wider quality spread requires.
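The sketch below builds a 500-tree forest with scikit-learn on synthetic data. It also shows one detail worth knowing: when scikit-learn reports probabilities, it averages each tree's class probabilities rather than taking a literal head count of votes. The data, the `max_depth` setting and the class labels are assumptions made for the demo, not BTP's configuration.

```python
import numpy as np
from sklearn.ensemble import RandomForestClassifier

rng = np.random.default_rng(42)

# Synthetic stand-in for the real features: two columns, three outcome classes.
X = rng.normal(size=(400, 2))
y = rng.integers(0, 3, size=400)  # 0 = home win, 1 = draw, 2 = away win (arbitrary labels)

forest = RandomForestClassifier(
    n_estimators=500,  # 500 trees, as quoted in the post
    max_depth=4,       # assumption: shallow trees, chosen only for this demo
    random_state=42,
).fit(X, y)

fixture = X[:1]
proba = forest.predict_proba(fixture)[0]  # one probability per class, summing to 1

# The forest's output equals the mean of the individual trees' probabilities.
tree_average = np.mean(
    [tree.predict_proba(fixture)[0] for tree in forest.estimators_],
    axis=0,
)
print(proba)
print(tree_average)
```

Each tree is fitted on its own bootstrap sample of the rows, which is where the "random" in the name comes from.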

Why it outperforms logistic regression here

Logistic regression assumes that the log-odds of each outcome change linearly with each feature, so the probability of a home win shifts smoothly and in one direction as a feature moves. Random Forest makes no such assumption: it can learn that home advantage matters a lot when positions are close but much less when the away team is 20 places above the home team. In a division with genuine quality extremes, that threshold-learning ability is worth 0.008 in log loss.

The trade-off: interpretability

The downside of Random Forest is that you can’t easily read off why it made a specific prediction. Logistic regression gives you a coefficient per feature — you can see that league position contributes this much and form contributes that much. With 500 trees averaging together, you get feature importance across the whole dataset but not a clean explanation for any single fixture. The BTP system uses feature importance for the overall model description but can’t provide “this fixture was predicted home win because X” at the individual level.

📈 How Did the Models Perform?

📊 Model Comparison — 2024/25 Test Season (557 matches)

All four models were evaluated on 2024/25, a season none of them had seen during training (train set: 2019/20–2023/24, 2,633 matches). The naive baseline predicts the training-set class distribution for every match — the floor that any model must beat to add value.
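A season-based split and the naive baseline can be sketched as follows. The seven sample rows are made up to keep the arithmetic visible; the real split is 2,633 training matches and 557 test matches.

```python
from collections import Counter

# Hypothetical rows: (season, outcome), with outcome one of "H", "D", "A".
matches = [
    ("2022/23", "H"), ("2022/23", "D"), ("2023/24", "H"),
    ("2023/24", "A"), ("2023/24", "H"), ("2024/25", "D"),
    ("2024/25", "H"),
]

TEST_SEASON = "2024/25"
# Season labels in this format sort chronologically as plain strings.
train = [outcome for season, outcome in matches if season < TEST_SEASON]
test = [outcome for season, outcome in matches if season == TEST_SEASON]

# Naive baseline: the training-set frequency of each outcome,
# reused unchanged for every match in the test season.
counts = Counter(train)
baseline = {outcome: counts[outcome] / len(train) for outcome in ("H", "D", "A")}
predictions = [baseline for _ in test]
print(baseline)  # {'H': 0.6, 'D': 0.2, 'A': 0.2}
```

Splitting by season rather than at random means the test set is always later than the training data, which mirrors how the model is used in practice.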

Log loss measures how well a model’s predicted probabilities match what actually happened. Unlike accuracy — which just asks “did you pick the right winner?” — log loss rewards confident correct predictions and punishes confident wrong ones. A model that says “70% home win” and the home team wins scores better than one that says “40% home win” for the same result.

Lower is better. A completely uninformed model assigning equal probability to all outcomes (33%/33%/33%) would score around 1.099. Our baseline — which uses the actual historical frequency of each outcome — scores 1.072. The Random Forest model scores 1.040, a clear and consistent improvement across the full 557-match test set.
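The figures above can be checked with a few lines of standard-library Python. The `log_loss` helper here is a plain re-implementation for illustration, not the function used in the BTP pipeline.

```python
import math


def log_loss(probabilities: list[dict[str, float]], outcomes: list[str]) -> float:
    """Mean negative log of the probability given to what actually happened."""
    total = sum(math.log(p[o]) for p, o in zip(probabilities, outcomes))
    return -total / len(outcomes)


# Equal thirds on every match scores ln(3), whatever the results are.
uniform = {"H": 1 / 3, "D": 1 / 3, "A": 1 / 3}
print(round(log_loss([uniform] * 3, ["H", "D", "A"]), 3))  # 1.099

# A single home win: the more confident correct forecast is penalised less.
print(round(-math.log(0.70), 3))  # 0.357
print(round(-math.log(0.40), 3))  # 0.916

# Gap between the quoted baseline and the quoted Random Forest score.
print(round(1.072 - 1.040, 3))  # 0.032
```

Because every match contributes the negative log of one probability, a single overconfident miss (say 5% on the actual result, costing about 3.0) can undo the gains from many good forecasts.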

For context, even sophisticated commercial football models rarely achieve log loss below 1.00 on three-outcome prediction. The irreducible randomness in football is real — but League One’s larger quality gaps give the model more signal to work with than the Championship.

Lower log loss = better probability calibration. Random Forest wins by a clear margin. XGBoost barely beats the baseline and is not used in production.

Honest framing: Random Forest’s 0.032 improvement over the baseline is stronger than the Championship model’s 0.009 improvement. That said, the absolute log loss values are similar — both models are operating in the same ballpark of “modest but real improvement over chance.” League One is not dramatically more predictable than the Championship. It’s slightly more predictable, for structural reasons. That’s a genuine finding, not a dramatic one.

⚠️ What the Model Can and Can’t Do

🔍 Limitations

Draws are almost unpredictable

Across all models, draw recall is near zero — the model rarely predicts a draw even though draws make up ~25% of results. This is the fundamental problem in football prediction: draws look almost identical to close home or away wins before kickoff. No model based on historical team statistics solves this reliably. When the model assigns 35% to a draw, as it does for Exeter vs Orient in GW40, it is not really “predicting” a draw — it is acknowledging uncertainty.
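Draw recall can be made concrete with a small sketch: it is the share of actual draws for which the draw was the model's top pick. The forecasts and results below are invented for illustration and are not BTP outputs.

```python
def draw_recall(probabilities: list[dict[str, float]], outcomes: list[str]) -> float:
    """Share of actual draws where 'D' was the model's most likely outcome."""
    draws = [p for p, o in zip(probabilities, outcomes) if o == "D"]
    if not draws:
        raise ValueError("no draws in the sample")
    hits = sum(1 for p in draws if max(p, key=p.get) == "D")
    return hits / len(draws)


# Hypothetical forecasts: the draw sits between 26% and 29% but is never the top pick.
forecasts = [
    {"H": 0.45, "D": 0.28, "A": 0.27},
    {"H": 0.36, "D": 0.29, "A": 0.35},
    {"H": 0.30, "D": 0.27, "A": 0.43},
    {"H": 0.50, "D": 0.26, "A": 0.24},
]
results = ["D", "D", "A", "H"]
print(draw_recall(forecasts, results))  # 0.0
```

A model can give draws a sensible probability on every fixture and still score zero recall, because recall only counts the single most likely outcome.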

No squad information

The model knows nothing about injuries, suspensions, or rotation. A team rated as strong favourites based on their last 10 games will carry that rating even if their top scorer is out. This is a known and meaningful gap — particularly in League One, where individual player impact is often larger than in the Championship.

No xG — goals only

The League One model relies entirely on goals scored, goals conceded, and points earned — no expected goals data exists for this division in the BTP database. This means the model cannot distinguish between a team that is scoring from on-target shots with high conversion rates and a team creating genuinely better chances but running cold. When xG becomes available for League One, it will be incorporated and the model retrained. The Championship’s xG experiment showed this will likely need several seasons of coverage to pay off in test performance.

It will improve over time

As 2025/26 completes, the training volume grows from 2,633 to ~3,190 rows. Each additional season of data refines the model’s understanding of what League One-level form looks like. If xG data becomes available, the model will be extended in the same way the Championship xG model was built. The methodology is documented and the pipeline is automated — retraining is a single script run at the end of each season.

🔴 Live Predictions

⚡ How It Works on the Site

Each gameweek, before fixtures kick off, a Python script connects to the BTP database and computes rolling form and league position for every upcoming League One fixture. Probabilities are generated by the Random Forest model and written to the database alongside the Championship predictions. The same WordPress shortcode renders them as a horizontal bar chart on the page.
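To give a feel for the write-and-read step, here is a minimal sketch using an in-memory SQLite database. The table name, the column names and the choice of SQLite are all hypothetical; only the `leagueone_goals_v1` label and the Exeter vs Orient probabilities are taken from this post.

```python
import sqlite3

conn = sqlite3.connect(":memory:")  # stand-in for the real BTP database
conn.execute(
    """
    CREATE TABLE predictions (  -- hypothetical table and columns
        fixture TEXT,
        model_version TEXT,
        p_home REAL,
        p_draw REAL,
        p_away REAL
    )
    """
)

# Exeter City vs Leyton Orient, GW40: 29% / 35% / 36% as quoted in the post.
conn.execute(
    "INSERT INTO predictions VALUES (?, ?, ?, ?, ?)",
    ("Exeter City vs Leyton Orient", "leagueone_goals_v1", 0.29, 0.35, 0.36),
)
conn.commit()

# A page template could then read back only the League One rows.
rows = conn.execute(
    "SELECT fixture, p_home, p_draw, p_away FROM predictions WHERE model_version = ?",
    ("leagueone_goals_v1",),
).fetchall()
print(rows)
```

Tagging each row with a model version lets predictions from different models share one table and still be filtered cleanly.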

The League One predictions are stored in the same table as Championship predictions, distinguished by model_version='leagueone_goals_v1'. Here’s an example from GW40 (28 March 2026) — Exeter City hosting Leyton Orient:

Match Prediction

Exeter City vs Leyton Orient, GW40, 28 March 2026. Orient 17th (48 pts), Exeter 20th (42 pts). A near three-way split: Exeter win 29% / draw 35% / Orient win 36%.

The model’s most uncertain fixture of GW40. A near three-way split reflects two sides whose recent form and table position are genuinely difficult to separate statistically. The eye test favours Orient — who arrive on a four-game winning run — but the model is essentially saying this is a coin flip. 28 predictions were generated for GW40 in total, covering all League One fixtures through 4 April.
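One way to put a number on "most uncertain" is Shannon entropy, which peaks when all three outcomes are equally likely. Whether BTP ranks fixtures this way is not stated, so treat the measure as an assumption; the lopsided comparison fixture is invented.

```python
import math


def entropy(probabilities: list[float]) -> float:
    """Shannon entropy in nats; higher means a more even, less certain forecast."""
    return -sum(p * math.log(p) for p in probabilities if p > 0)


exeter_orient = [0.29, 0.35, 0.36]  # home / draw / away, from the post
one_sided = [0.70, 0.20, 0.10]      # hypothetical lopsided fixture for contrast

print(round(entropy(exeter_orient), 3))  # 1.094
print(round(math.log(3), 3))             # 1.099, the maximum for three outcomes
print(round(entropy(one_sided), 3))      # 0.802
```

At 1.094 against a ceiling of 1.099, the Exeter vs Orient forecast is about as close to an even three-way split as a forecast gets.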

These are probability estimates, not certainties. A 36% probability for Orient means the model thinks they win roughly 1 time in 3, not that they will definitely win. Exeter winning would not be a surprise at 29%. The draw, at 35%, is rated almost exactly as likely as an Orient win. Football doesn’t do certainties, and neither does this model.

📅 Going Forward

📌 Predictions Every Gameweek

From GW40 onwards, ML probability predictions will be embedded in every BTP League One gameweek preview alongside the Championship predictions. They appear in a dedicated ML Predictions tab next to the usual form tables, head-to-head data, and fixture analysis: another data layer to inform how you think about each game.

The model will be retrained at the end of 2025/26 to incorporate the full current season’s data. If xG data becomes available for League One, a parallel xG model will be built using the same methodology documented in the Championship explainer. The methodology will always be documented here.

The League One prediction system is the companion piece to the Championship model — built on the same principles, trained on the same timeframe, but producing a notably stronger result. Both are built entirely on BTP’s own database. If you have questions about the methodology or want to discuss specific numbers, the technical details are available on request.

Model: Random Forest (scikit-learn, 500 estimators). Training data: League One 2019/20–2023/24 (2,633 matches). Test: 2024/25 (557 matches). Predictions for 2025/26 use completed fixtures up to point of generation. Not financial advice.